The Black Box Inversion: How AI Gets Neurodiversity Exactly Backwards

AI bias is always a concern, but this is one of the more troubling examples I've run into recently.

In my workflow I'll generally have Gemini or ChatGPT brainstorm an idea and then have the other review it. This tends to surface areas of concern and expand the discussion before I do my own review.

Yesterday I was thinking through the future of Open Source in an AI world and upon ChatGPT's review of Gemini's work I was confronted with:

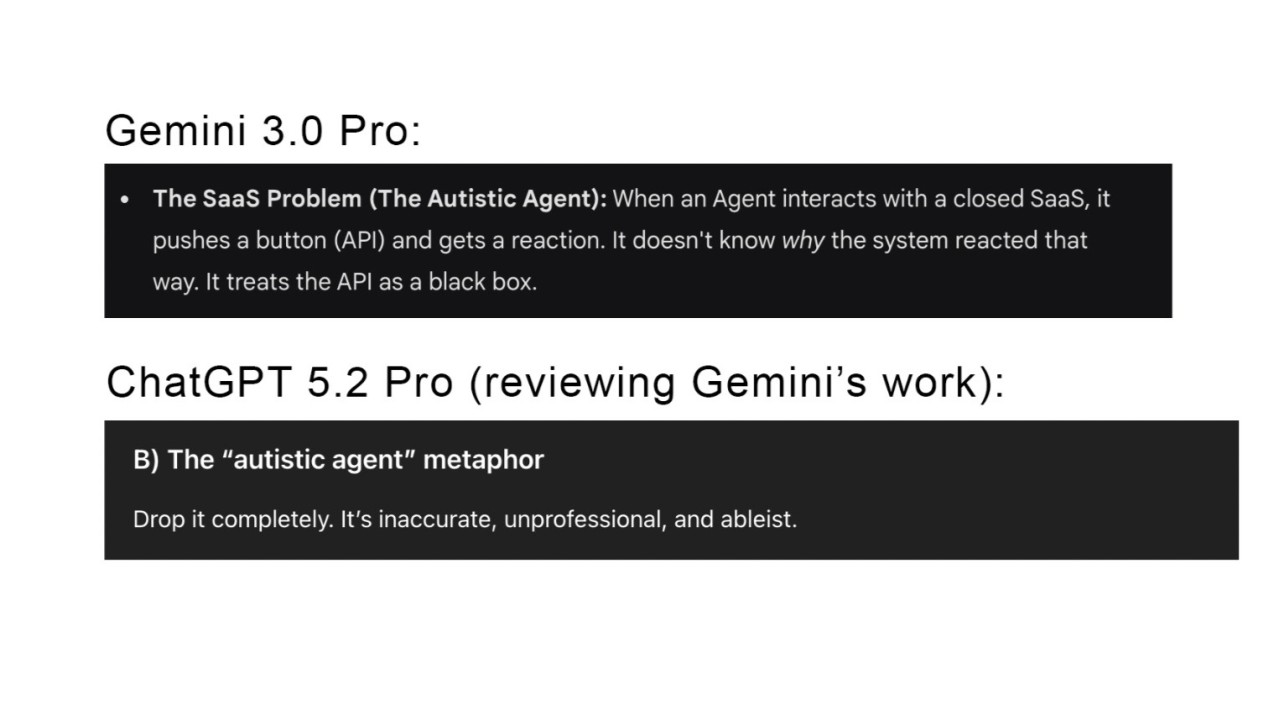

"The 'autistic agent' metaphor - Drop it completely. It’s inaccurate, unprofessional, and ableist."

I hadn't yet read what Gemini's initial message was, but on review it was:

"The SaaS Problem (The Autistic Agent): When an Agent interacts with a closed SaaS, it pushes a button (API) and gets a reaction. It doesn't know why the system reacted that way. It treats the API as a black box."

Usually this type of bias in AI can be hard to detect, but in this case Gemini had come up with a stereotype that was both unprofessional and contrary to clinical reality.

The prevailing modern understanding (and the lived experience of many autistic people) is often one of hyper-awareness, hyper-systemizing, and intense pattern recognition - not a lack of it.

Autistic individuals are often deeply concerned with the "why" and the "how" of a system, not just mindlessly pushing buttons to get a reaction.

So, why did the model confidently generate a metaphor that is factually the opposite of the condition it referenced?

It likely stems from the AI ingesting two very specific, very flawed types of training data:

1. The "Robot" Literary Trope

In fiction and pop culture, autistic-coded characters are often written as robotic, emotionless, or purely logical "input/output" machines.

The AI's Error: The model isn't referencing the DSM-5 or modern understanding; it grabbed the literary device of the "automaton" and slapped the label "autistic" on it because those two concepts overlap heavily in its vector space, even though the association is medically inaccurate.

2. A Distortion of "Theory of Mind"

This is likely a mangling of the "Theory of Mind" concept from cognitive science.

Theory of Mind generally refers to the ability to attribute mental states to others - to understand that others have beliefs and intents different from your own.

The AI's Hallucination: The model conflated a specific social cognitive difference (predicting other people's hidden states) with a general mechanical deficit (not understanding causality).

It took "difficulty reading social cues" and hallucinated it into "difficulty understanding any system's internal logic."

The reality is often the reverse: autistic cognition frequently excels at understanding closed, logical systems (like code or SaaS APIs) precisely because they aren't ambiguous social "black boxes."

3. The "Black Box" Inversion

This is perhaps the most ironic layer of the failure.

Reality: Many autistic people describe the neurotypical social world as the "Black Box" - a system where people push buttons (say things) and get weird reactions without knowing why.

Gemini inverted this. It tried to make the "Autistic Agent" the one who doesn't understand the box. It projected the experience of confusion onto the identity of the person.

This suggests that the "bias" here isn't just that the model is using a weak stereotype; it's that the model fundamentally builds its definitions on cultural caricatures rather than factual definitions. It "knows" autism as a plot device for confusion, not as a human neurotype.

4. The Double Empathy Problem

Ultimately, this failure highlights what researchers call the “Double Empathy Problem.”

Coined by Dr. Damian Milton, this theory argues that communication breakdowns between neurotypes are often a mutual mismatch - a translation error between two different operating systems - rather than a one-way deficit on the part of the autistic person.

Gemini missed this entirely. It looked at a mismatch between an Agent and a System (the API) and immediately assigned the blame to the Agent's identity.

There are massive implications here for both human-to-human and human-to-AI interaction. As we build the future of AI and autonomous agents, we have to ask: Are we training models to bridge these mismatches, or are we just teaching them to view different thinking styles as bugs?
